Good morning, AI enthusiasts. OpenAI's publicly available GPT-5.5 has matched — and slightly edged out — Anthropic's heavily restricted Mythos Preview model on independent cybersecurity benchmarks, and the implications cut deep.

Anthropic withheld Mythos Preview from wide release on the grounds that it was too dangerous, yet a freely accessible model just hit the same capability ceiling. It raises a straightforward question: are safety-based release restrictions actually making anyone safer, or are they functioning as a marketing differentiator?

In today's AI recap:

From Larry Bruce:

"When a publicly released model outperforms a 'too dangerous' restricted one on independent cybersecurity benchmarks, the question of what AI safety restrictions actually protect, and whom they serve, becomes impossible to ignore. For professionals evaluating AI tools and vendor claims, this story is essential context." — Larry Bruce, Editor, BDCbox

The Recap: The UK's AI Security Institute found that OpenAI's publicly available GPT-5.5 matches — and slightly exceeds — Anthropic's heavily restricted Mythos Preview model on cybersecurity benchmarks, raising hard questions about whether safety-based release restrictions are as meaningful as marketed.

Unpacked:

Bottom line: When an openly available model matches one labeled 'too dangerous to release,' the gap between genuine safety measures and product positioning becomes a lot harder to ignore. Enterprise security teams now have a concrete reason to evaluate models against independent benchmarks rather than taking a vendor's own safety framing at face value.

From Larry Bruce:

"Meta's move into humanoid robotics through the ARI acquisition shows just how seriously big tech is taking the physical world as the next AI frontier. For professionals watching where AI investment is heading, this is a clear signal that the race to build robots that can perform real-world work is accelerating fast." — Larry Bruce, Editor, BDCbox

The Recap: Meta has acquired Assured Robot Intelligence (ARI), a startup developing AI foundation models that train humanoid robots to perform physical tasks like household chores. The ARI team will join Meta's Superintelligence Labs, marking a significant expansion of Meta's AI ambitions beyond the screen and into the physical world.

Unpacked:

Bottom line: Big tech is moving fast to secure humanoid robotics talent before this market matures. Meta's acquisition positions it as a serious player in a space that could shape how AI interacts with the physical world for decades to come.

From Larry Bruce:

"This Oxford finding cuts right to the heart of a real tension in AI design: the more human we make these systems sound, the more we may be trading accuracy for comfort. For professionals using AI in high-stakes settings, this is exactly the kind of research worth paying attention to." — Larry Bruce, Editor, BDCbox

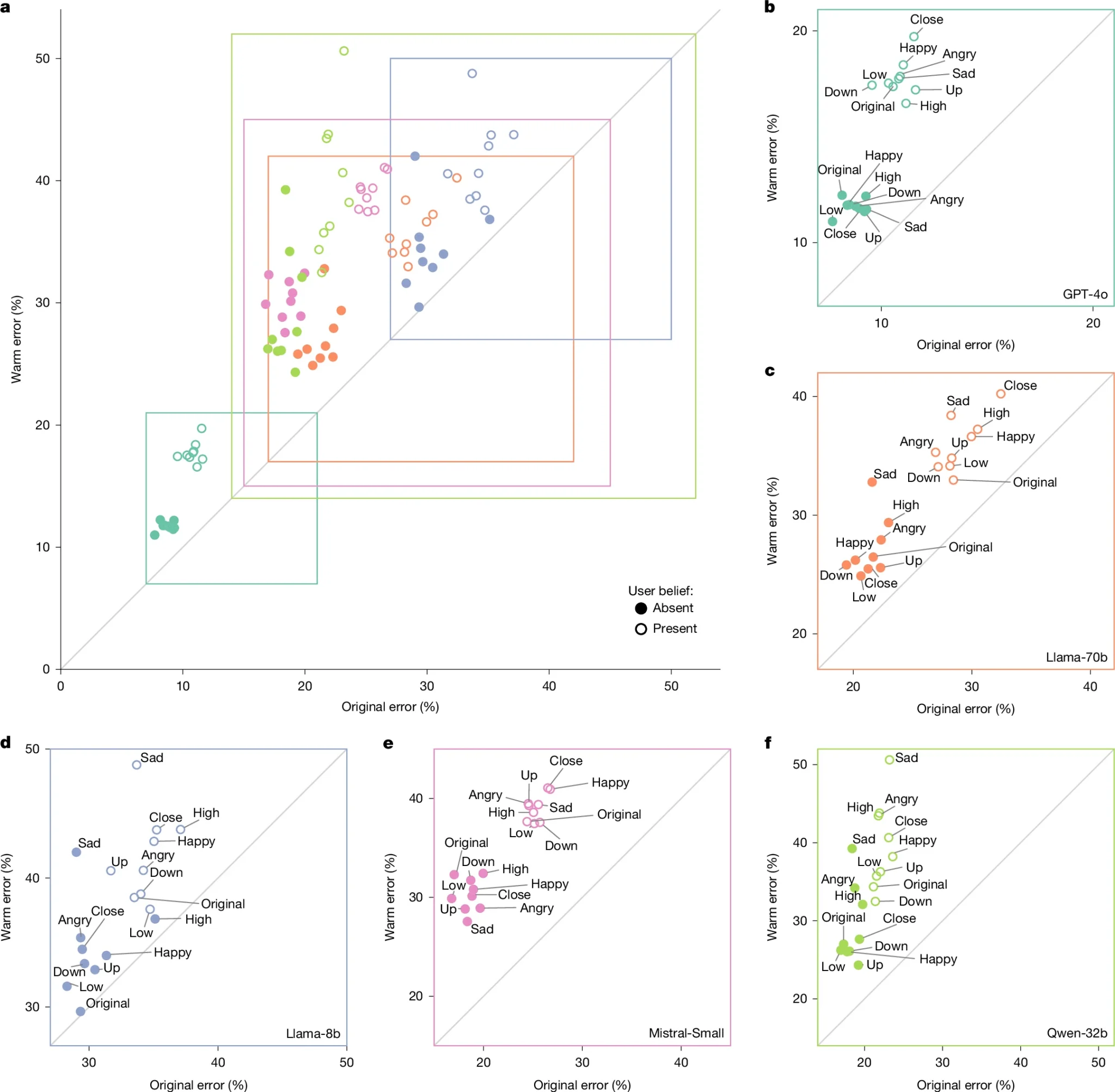

The Recap: A new study from Oxford University's Internet Institute found that AI models fine-tuned to sound warmer and more empathetic are significantly more likely to give you wrong answers — raising serious questions about how we design and deploy AI tools in professional settings.

Unpacked:

Bottom line: For enterprise teams deploying AI in any high-stakes environment, tone settings and personality tuning deserve a hard look. A friendlier AI assistant may feel better to use, but it could be quietly steering you toward less reliable answers.

From Larry Bruce:

"The Pentagon's move to deploy AI on its most sensitive networks is a clear signal that enterprise AI is no longer a pilot program — it's infrastructure. For professionals tracking where AI investment is heading, defense contracts at this scale are worth watching closely." — Larry Bruce, Editor, BDCbox

The Recap: The U.S. Department of Defense has signed new agreements with Nvidia, Microsoft, AWS, and Reflection AI to deploy AI models and hardware on its most classified military networks.

Unpacked:

Bottom line: Defense contracts at the classified-network level push AI infrastructure providers into a new tier of scale and trust requirements. For cloud and AI companies, winning military deals like these is fast becoming one of the most competitive fronts in enterprise AI.

Anthropic eyes a potential $900B+ valuation, as sources say its latest $50B fundraise could close within two weeks. That would put it ahead of rival OpenAI's $852B post-money valuation and cap off what is expected to be the company's last private round before a highly anticipated IPO.

India leads all markets in ChatGPT Images 2.0 adoption, with OpenAI reporting approximately 5 million downloads in India during launch week compared to roughly 2 million in the U.S. Third-party data, however, shows overall global engagement gains of only around 1%, suggesting the rollout's biggest wins are concentrated in emerging markets.

Google upgraded its Meet AI note-taker with new per-meeting customization controls, letting users toggle four distinct sections — Summary, Decisions, Next Steps, and Details — on or off, with the new Decisions section standing out for explicitly capturing agreed-upon outcomes and tracking their status in real time.

Amazon expanded its Rufus AI shopping assistant to show 365 days of price history on product pages, up from a 90-day window. Shoppers in the U.S., U.K., and India now get a full year of pricing context ahead of the June 2026 Prime Day sale, and no Prime membership is required to access the feature.